Optimieren von Workflows mit In-Memory und nativ kompilierten Objekten im SQL Server

– oder wie es nicht funktioniert

Beim Optimieren von Datenzugangs-Code mit den In-Memory-Funktionalitäten bei Microsoft SQL Server (memory-optimierte Tabellen + Indexe, memory-optimierte tabellengewertete Parameter und nativ kompilierte gespeicherte Prozeduren) wird man oftmals feststellen, dass man manchmal einen Schritt nach vorn und dann wieder einen rückwärts macht.

Wie so oft steckt der Teufel im Detail.

Nehmen wir ein Beispiel-Szenario einer gespeicherten Prozedur, die einen Workflow hat, die wie folgt zusammengefasst werden kann:

- Eine temporäre Tabelle erstellen (#)

- Einen Datensatz einfügen, ~200.000 Zeilen

- Die Temptable aktualisieren, unter Verwendung eines Joins zu einer (festplattenbasierter) Tabellen (Dimension)

- Die Temptable weiter aktualisieren

- Einige Zeilen löschen

- Die übrigen Daten in eine Ziel-Tabelle (Fact) (festplattenbasiert) einfügen

Dieses Muster ist bei ETL-Szenarien nicht so ungewöhnlich, wenn man Daten in Datawarehouse Fact-Tabellen lädt, aber können in vielen anderen Kontexten verwendet werden.

Jetzt könnte man versucht sein, die Performance zu verbessern, indem man eine Memory-optimierten Table Valued Parameter (TVP) anstelle der Temptable verwendet.

- Eine memory-optimierte TVP erstellen (@)

- Einen Datensatz einfügen, ~200.000 Zeilen

- Die TVP aktualisieren, indem man sie mit einer anderen, festplattenbasierten Tabelle joint

- Die TVP weiter aktualisieren

- Einige Zeilen löschen

- Die übrigen Daten in eine Ziel-Tabelle (festplattenbasiert) einfügen

Die Idee dahinter ist, dass man in der XTP-Engine ohne Locks arbeiten kann, sogar ohne Latches, und in bestimmten Szenarien noch weiter ohne jegliche IO. (Hier ein bisschen mehr dazu: The SQL Server 2016. In-Memory Evolution – from row-store to columnstore to in-memory operational analytics, SQL Server 2016 SP1 removes the editions’ programming feature limits! Everything from Always Encrypted to XTP/In-memory for everybody!)

Das Konzept wäre, einfach die Temporäre Tabelle mit einer Memory-Optimierten TVP zu ersetzen.

Doch das Ergebnis könnte euch verblüffen: Es kann ein bisschen schneller werden oder auch nicht – oder sogar ein bisschen langsamer!

Wenn eine zweite Tabelle beteiligt ist (d.h. eine Dimension verknüpfen), dann sollten wir vielleicht auch diese zweite Tabelle memory-optimiert vorbereiten.

Aber in meinem Fall werdet ihr immer noch sehen, es wird sogar noch langsamer!

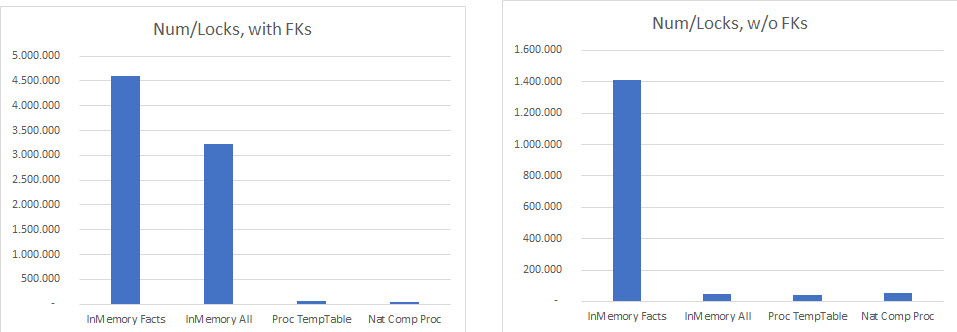

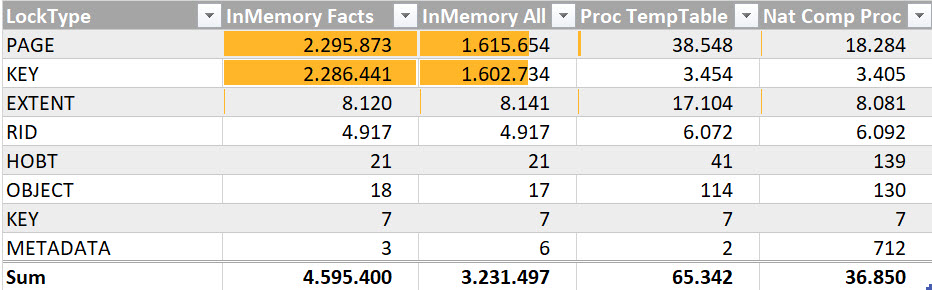

Das Problem liegt unter anderem in der Menge von Sperren, die noch beteiligt sind:

Warum in aller Welt haben wir auf einmal MEHR Sperren/Locks, wenn wir memory-optimierte Tabellen verwenden?

Die Antwort liegt in der Tatsache, dass wir, um die Daten in die memory-optimierten Tabellen zu bekommen, sie aus den festplattenbasierten Tabellen auslesen und am Ende zurückschreiben müssen (in meinem Fall sogar unterstützt durch ein Clustered Columnstore Index – vergeblich…). Umso mehr, wenn sich die memory-optimierte Tabelle/TVP mit der immer noch festplattenbasierten Dimension-Tabelle verknüpft.

Wenn man die Fremdschlüssel während dieses Prozesses deaktiviert oder entfernt, wird man eine gleiche Performance-Verbesserung für all die getesteten Herangehensweisen sehen.

Um also wirklich eine erkennbare Performance-Verbesserung zu erhalten, gibt es leider in vielen Fällen keinen „schnellen Gewinn“.

Das liegt daran, dass, wie beschrieben, das Bottleneck “Festplatte” nicht einfach entfernt wird, sondern immer noch bestehen bleibt im Interop-Modus. Alle Interaktionen zwischen der In-Memory-Engine und der Standard-Seitenbasierten-Engine verlangsamen eure Arbeit. Ihr müsst dies also wirklich soweit wie möglich eliminieren. Aus diesem Grund sage ich manchmal: „Um wirklich von In-Memory zu profitieren, müsst ihr eure Prozesse neu designen.“

Das bedeutet: Bringt euren Workflow und euer Schema(!) weit möglichst komplett in In-Memory!

Wenn ihr einmal diese Etappe gemeistert habt, werdet ihr mit der Fähigkeit belohnt, nativ kompilierte gespeicherte Prozeduren verwenden zu können.

Bereits ihrer Natur nach können diese nicht mit On-Disk/seitenbasierten Tabellen funktionieren und von extrem optimiertem kompiliertem Code profitieren, die nie wieder neukompiliert werden, es sei denn, ihr wollt es ausdrücklich.

Auf dem Weg zum Ziel werdet ihr sicherlich auf eine der vielen immer noch existierenden Einschränkungen der XTP-Engine stoßen, die nicht dieselbe T-SQL-Oberfläche wie die seit Jahrzehnten entwickelte Standard-Engine unterstützt.

Aber das wird in jeder Version besser. Bei SQL Server 2016 werdet ihr höchstwahrscheinlich von Fehlern wie diese behindert:

Msg 12311, Level 16, State 39, Procedure ProcedureName, Line 73 [Batch Start Line 11]

Unterabfragen (innerhalb einer anderen Abfrage geschachtelte Abfragen) werden nur in SELECT-Anweisungen mit nativ kompilierten Prozeduren unterstützt.

Msg 12319, Level 16, State 81, Procedure ProcedureName, Line 64 [Batch Start Line 11]

Das Verwenden der FROM-Klausel in einer UPDATE-Anweisung und das Angeben einer Tabellenquelle in einer DELETE-Anweisung wird bei nativ kompilierten Prozeduren nicht unterstützt.Msg 10775, Level 16, State 1, Procedure ProcedureName, Line 36 [Batch Start Line 11]

Das Objekt ‚SchemaName.TableName‘ ist keine speicheroptimierte Tabelle oder keine systemintern kompilierte Inline-Tabellenwertfunktion, und es kann nicht von einem systemintern kompilierten Modul darauf zugegriffen werden.

Und einige mehr …

Wenn ihr das einmal mit cleverem Neudesign bewältigt habt, werdet ihr endlich vom wahren Potential von In-Memory profitieren, wie die ~50% Performance-Verbesserung in meinem Beispiel-ETL-Workflow.

Der optimierte Workflow geht so:

- Eine permanent verfügbare memory-optimierte Tabelle mit SCHEMA_ONLY vorbereiten

- Den Datensatz einfügen, bevor man die nativ kompilierte gespeicherte Prozedur startet

- Für das Insert schön die zweite Tabelle verknüpfen und die nötigen Daten/Spalten integrieren, um einen zweiten Insert zu vermeiden

- In der nativ kompilierten gespeicherten Prozedur: die memory-optimierte Tabelle aktualisieren, indem man einfache Statements ohne Subselects oder Verknüpfungen verwendet

- Einige Zeilen direkt in der Prozedur löschen

- Wieder außerhalb der nativ gespeicherten Prozedur: die restlichen Daten in die Ziel-Tabelle (festplattenbasiert) einfügen

Die Begrenzung ist immer noch die festplattenbasierte Engine, wie man in diesem Query-Plan des finalen Codes des finalen Inserts sehen kann:

Die finalen Ergebnisse und Performance lassen sich wie folgt vergleichen:

Es gibt noch viel mehr in einer solchen POC zu untersuchen, was aber über den Zweck dieses Artikels hinausgeht.

Zum Beispiel wird man vielleicht die brutal erhöhte Menge von Locks im Interop-Modus gegenüber Standard-T-SQL bemerken – verdächtig identisch zur Anzahl der Zeilen, die durchlaufen werden…

Ich hoffe, meine lieben Leser nehmen dies als Motivation, dass man, auch wenn der Weg steinig sein mag, erstaunliche Ergebnisse mit der In-Memory-Engine im SQL Server erhalten kann. Bei den Memory-Größen, die man heute für vergleichbar wenig Geld (man denke an 1.5TB pro Socket) kaufen kann, warte ich auf den Tag, an dem die meisten Daten einfach von Beginn an In-Memory sein werden. Und diejenigen von euch, die über diese neuen Paradigmen lernen werden, werden die ersten sein, die davon profitieren.

Wenn ihr diesen Sommer in Südasien seid, seid ihr eingeladen, zu meiner ganztägigen PreCon zu In-Memory-Technologien im SQL Server auf dem Data Platform Summit in Bangalore, Indien, am 16. August zu kommen:

Hier ein Direktlink: http://dataplatformgeeks.com/dps2017/pre-conference-seminars/

Happy memory-optimizing

Andreas

Hinterlasse einen Kommentar

An der Diskussion beteiligen?Hinterlasse uns deinen Kommentar!