When DROP USER Doesn’t Fully Drop Access: Stale EXTERNAL MODEL Permissions in SQL Server’s AI Integrations

Why deterministic security matters

Unlike areas such as performance tuning, where partial improvements or probabilistic outcomes may still provide value, security controls must behave predictably and consistently. The same configuration, permission, or revocation should always produce the same security outcome.

When identity or permission boundaries behave inconsistently – even under specific lifecycle conditions – that is more than a product bug. It becomes a security-relevant design issue, because security depends not just on how access is granted, but also on how reliably it is revoked.

While investigating the new permission model introduced alongside SQL Server 2025’s AI integration and vector search capabilities (Article: New Permissions in SQL Server 2025), I encountered a case where EXTERNAL MODEL permissions can persist after a user is dropped, creating stale authorization state.

Expected deprovisioning behavior

A fundamental expectation among DBAs is that dropping an account also removes its associated permission assignments.

- Drop principal

- Associated permissions removed from metadata

That assumption is foundational to deprovisioning, least privilege, and access governance.

Observed behavior

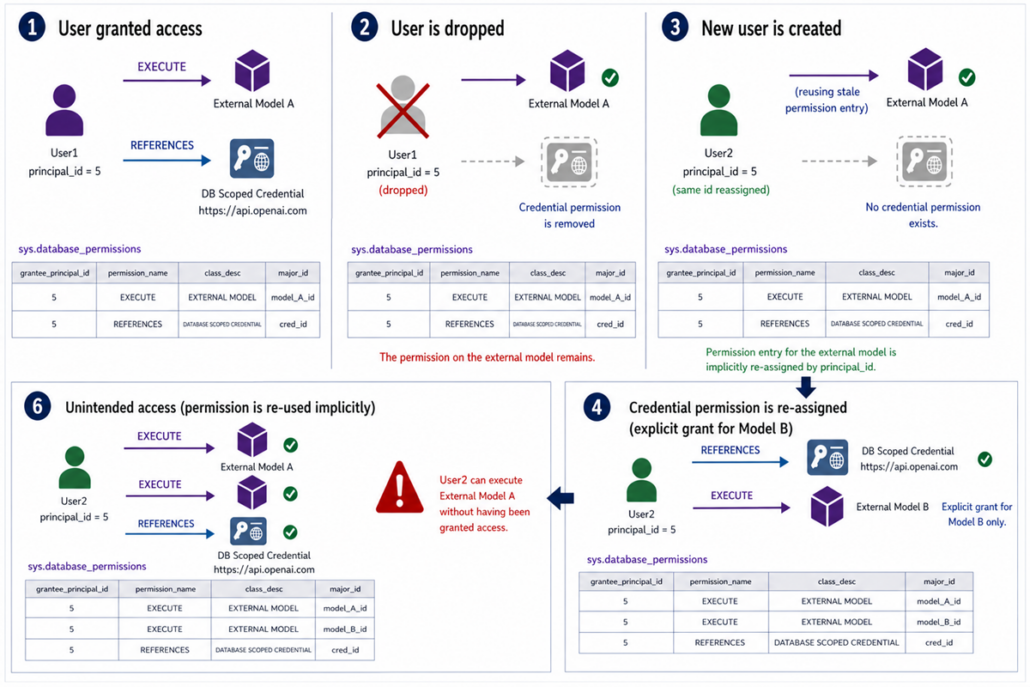

In testing the new EXTERNAL MODEL object to register external AI models such as OpenAI within SQL Server, I observed a permission lifecycle bug:

When a user that was granted EXECUTE on an external model is dropped, the permission entry remains in sys.database_permissions, still carrying the original principal_id (stored in the grantee_principal_id column).
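One way to look for such leftovers is to search for permission rows whose grantee no longer exists. The query below is a minimal sketch that uses only the standard catalog views sys.database_permissions and sys.database_principals. It is not restricted to external models, and it can only find stale rows as long as the principal_id has not yet been reused.

```sql
-- Permission rows whose grantee has no matching principal.
-- Once the principal_id is reused, the row no longer appears here,
-- because the join finds the new principal instead.
SELECT
    dp.class_desc,
    dp.major_id,
    dp.permission_name,
    dp.state_desc,
    dp.grantee_principal_id
FROM sys.database_permissions AS dp
LEFT JOIN sys.database_principals AS pr
    ON pr.principal_id = dp.grantee_principal_id
WHERE pr.principal_id IS NULL;
```

On a consistent database, this query would be expected to return no rows.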

Important background information

In SQL Server, newly created principals are assigned the lowest available principal_id.

As a result, a stale permission row can effectively become associated with a newly created principal that reuses the dropped principal's principal_id.
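The ID reuse can be checked in a test database with a short script. This is only a sketch: DemoUserA and DemoUserB are hypothetical names, and whether both IDs match depends on no other principal being created or dropped in between.

```sql
-- Hypothetical throwaway users; run in a test database only.
CREATE USER DemoUserA WITHOUT LOGIN;
DECLARE @first_id int = DATABASE_PRINCIPAL_ID('DemoUserA');
DROP USER DemoUserA;

CREATE USER DemoUserB WITHOUT LOGIN;

-- Compare the ID of the dropped user with the ID of the new one.
SELECT
    @first_id                        AS dropped_user_id,
    DATABASE_PRINCIPAL_ID('DemoUserB') AS new_user_id;

-- Clean up.
DROP USER DemoUserB;
```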

What this does – and does not – mean

This does not automatically mean the new principal can execute the model immediately.

To successfully use the external model, the new principal must also have the REFERENCES permission on the database scoped credential used by that model.

No automatic privilege escalation: credential permission remains a prerequisite.

However, if the new principal is later granted access to the same credential for another legitimate external model, the stale permission on the old model may become usable as well.

How unintended access can emerge

Let’s say there are multiple external models in play, each for separate use cases with different confidentiality or governance requirements.

A principal may gain access to an external model it was never explicitly granted, because:

- Old permission rows persist

- principal_id is reused

- Credential access overlaps

Stale-permission spread:

If the newly created principal is a database role rather than an individual user, this stale permission will be passed on to potentially multiple users through role membership.
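To gauge how far a stale permission could spread in that case, the members of the affected role can be listed from the catalog views. The sketch below uses RoleX, the placeholder role name from the example in this article, and only shows direct members (nested role membership is not resolved).

```sql
-- Direct members of a role that may carry a stale permission row.
SELECT
    r.name      AS role_name,
    m.name      AS member_name,
    m.type_desc AS member_type
FROM sys.database_role_members AS drm
JOIN sys.database_principals AS r
    ON r.principal_id = drm.role_principal_id
JOIN sys.database_principals AS m
    ON m.principal_id = drm.member_principal_id
WHERE r.name = N'RoleX';
```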

Practical example

Create User1:

- Granted EXECUTE on MyOpenAIModel1

- Granted REFERENCES on Credential A

Drop User1:

- Principal removed

- External model permission persists

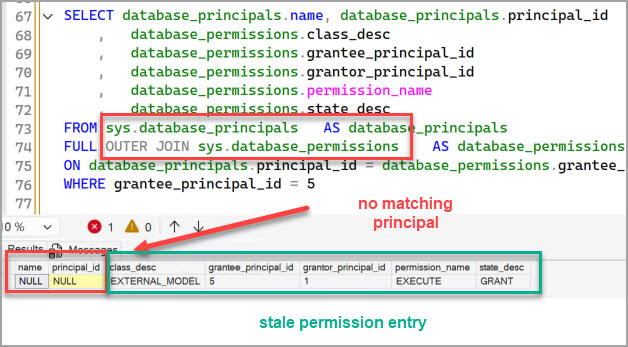

Here is a screenshot of the stale permission entry in the system object sys.database_permissions:

Create User2 or RoleX:

- Receives the lowest available principal_id, in this case that of the former User1

- Granted REFERENCES on Credential A for a different model

Result:

User2 / RoleX may now unexpectedly execute MyOpenAIModel1 as well, even though the stale permission row was never intentionally granted to User2 / RoleX.
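The steps above can be sketched as a T-SQL script for a test database. Several things here are assumptions: the external model MyOpenAIModel1 must already exist, [CredentialA] is a placeholder for the name of the database scoped credential that model actually uses, and the users are created WITHOUT LOGIN purely for convenience. The script only inspects metadata; it does not call the model.

```sql
-- Assumes MyOpenAIModel1 exists and uses the credential named below.
-- [CredentialA] is a placeholder; substitute the real credential name.
CREATE USER User1 WITHOUT LOGIN;
GRANT EXECUTE ON EXTERNAL MODEL::MyOpenAIModel1 TO User1;
GRANT REFERENCES ON DATABASE SCOPED CREDENTIAL::[CredentialA] TO User1;

-- Remember the ID before the user disappears.
DECLARE @old_id int = DATABASE_PRINCIPAL_ID('User1');
DROP USER User1;

-- Any row returned here is a permission that outlived its principal.
SELECT class_desc, major_id, permission_name, state_desc, grantee_principal_id
FROM sys.database_permissions
WHERE grantee_principal_id = @old_id;

-- A new principal may now receive the freed ID.
CREATE USER User2 WITHOUT LOGIN;
SELECT @old_id AS former_user1_id,
       DATABASE_PRINCIPAL_ID('User2') AS user2_id;

-- Legitimate grant for a different model that shares the credential.
GRANT REFERENCES ON DATABASE SCOPED CREDENTIAL::[CredentialA] TO User2;

-- Everything now recorded for User2, including rows it never received.
SELECT class_desc, major_id, permission_name, state_desc
FROM sys.database_permissions
WHERE grantee_principal_id = DATABASE_PRINCIPAL_ID('User2');
```

If both IDs match and the last query lists an EXECUTE row that was never granted to User2, the stale permission has been inherited.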

Here is a visual of what is happening:

Security and governance implications

Identity lifecycle and authorization hygiene

Identity lifecycle and authorization hygiene matter because real-world security controls depend on predictable deprovisioning.

Confidentiality

If the old model points to sensitive AI endpoints, proprietary workflows, or regulated processing paths, unintended reassignment could expose capabilities beyond intended authorization.

Governance

As newer securable classes are introduced, permission lifecycle consistency becomes increasingly important for governance:

- Joiner / mover / leaver controls (JML)

- Least privilege assumptions

- Permission reviews

- Audit expectations

Why AI integrations raise the stakes

External models are not ordinary static table objects.

They may:

- Connect to OpenAI / Azure OpenAI endpoints

- Use a different AI model inference endpoint over time (the EXTERNAL MODEL object in SQL Server explicitly allows for that flexibility)

- Process sensitive enterprise text

- Support embeddings or downstream automation

- Interact with governed AI workflows

That makes stale permission persistence more consequential than a simple metadata inconsistency.

Practical risk reduction

The Microsoft Security Response Center (MSRC) acknowledged this behavior as a bug and assigned it low severity, which may affect remediation prioritization.

Given that External Models are database-scoped objects, you cannot use schema-level permissions as a workaround.

One best practice may help reduce risk: assign permissions to roles rather than directly to users.

Grant the permissions to database roles and manage access through role membership. When a user is dropped, the role remains in the system together with its permission, which is the expected behavior.

However, this does not fully solve permission removal if the role itself should later be decommissioned. In that case, dropping the external model may still be required.
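A minimal sketch of the role-based pattern could look like this. The names ModelUsersRole and SomeUser are hypothetical, [CredentialA] is again a placeholder for the credential the model uses, and MyOpenAIModel1 is assumed to exist.

```sql
-- Hypothetical role that carries the model permissions.
CREATE ROLE ModelUsersRole;
GRANT EXECUTE ON EXTERNAL MODEL::MyOpenAIModel1 TO ModelUsersRole;
GRANT REFERENCES ON DATABASE SCOPED CREDENTIAL::[CredentialA] TO ModelUsersRole;

-- Users get access through membership only, never through direct grants.
CREATE USER SomeUser WITHOUT LOGIN;
ALTER ROLE ModelUsersRole ADD MEMBER SomeUser;

-- Dropping the user leaves no user-level permission row behind,
-- because the EXECUTE permission belongs to the role.
DROP USER SomeUser;
```

With this layout, the only principal holding a permission row on the external model is the role, so the stale-row problem is deferred until the role itself is dropped.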

Final thought

Historically, SQL Server has maintained strong consistency around principal and permission cleanup, which makes this deviation on a newer securable type somewhat unusual.

Security is defined not only by how effectively access is granted, but by how reliably it is revoked. As SQL Server expands into newer AI-driven securables, permission lifecycle consistency should remain just as foundational as the access controls themselves.

Andreas
